I wrote a post recently about how query tuning isn’t just about how quickly the query runs – that if you have something (such as SSIS) that is consuming your data (and probably introducing a bottleneck), then it might be more important to have a query which focuses on getting the first bit of data out. You can read that post here.

In particular, we looked at two operators that could be used to ensure that a query returns only Distinct rows.

and

and

The Sort operator pulls in all the data, sorts it (discarding duplicates), and then pushes out the remaining rows. The Hash Match operator performs a Hashing function on each row as it comes in, and then looks to see if it’s created a Hash it’s seen before. If not, it pushes the row out. The Sort method is quicker, but has to wait until it’s gathered all the data before it can do the sort, and therefore blocks the data flow.

But that was my last post. This one’s a bit different.

This post is going to look at how Aggregate functions work, which ties nicely into this month’s T-SQL Tuesday.

This post is going to look at how Aggregate functions work, which ties nicely into this month’s T-SQL Tuesday.

I’ve frequently explained about the fact that DISTINCT and GROUP BY are essentially the same function, although DISTINCT is the poorer cousin because you have less control over it, and you can’t apply aggregate functions.

Just like the operators used for Distinct, there are different flavours of Aggregate operators – coming in blocking and non-blocking varieties. The example I like to use to explain this is a pile of playing cards.

If I’m handed a pile of cards and asked to count how many cards there are in each suit, it’s going to help if the cards are already ordered. Suppose I’m playing a game of Bridge, I can easily glance at my hand and count how many there are in each suit, because I keep the pile of cards in order. Moving from left to right, I could tell you I have four Hearts in my hand, even before I’ve got to the end. By telling you that I have four Hearts as soon as I know, I demonstrate the principle of a non-blocking operation.

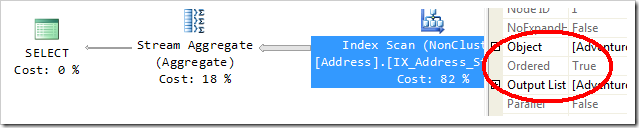

This is known as a Stream Aggregate operation. It requires input which is sorted by whichever columns the grouping is on, and it will release a row as soon as the group changes – when I encounter a Spade, I know I don’t have any more Hearts in my hand.

This is known as a Stream Aggregate operation. It requires input which is sorted by whichever columns the grouping is on, and it will release a row as soon as the group changes – when I encounter a Spade, I know I don’t have any more Hearts in my hand.

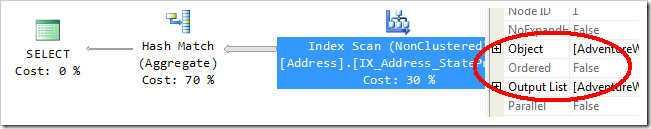

Alternatively, if the pile of cards are not sorted, I won’t know how many Hearts I have until I’ve looked through all the cards. In fact, to count them, I basically need to put them into little piles, and when I’ve finished making all those piles, I can count how many there are in each. Because I don’t know any of the final numbers until I’ve seen all the cards, this is blocking. This performs the aggregate function using a Hash Match. Observant readers will remember this from my Distinct example.

You might remember that my earlier Hash Match operation – used for Distinct Flow – wasn’t blocking. But this one is. They’re essentially doing a similar operation, applying a Hash function to some data and seeing if the set of values have been seen before, but before, it needs more information than the mere existence of a new set of values, it needs to consider how many of them there are.

A lot is dependent here on whether the data coming out of the source is sorted or not, and this is largely determined by the indexes that are being used. If you look in the Properties of an Index Scan, you’ll be able to see whether the order of the data is required by the plan. A property called Ordered will demonstrate this.

In this particular example, the second plan is significantly faster, but is dependent on having ordered data.

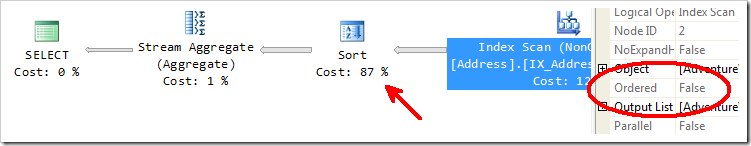

In fact, if I force a Stream Aggregate on unordered data (which I’m doing by telling it to use a different index), a Sort operation is needed, which makes my plan a lot slower.

This is all very straight-forward stuff, and information that most people are fully aware of. I’m sure you’ve all read my good friend Paul White (@sql_kiwi)’s post on how the Query Optimizer chooses which type of aggregate function to apply.

But let’s take a look at SQL Server Integration Services.

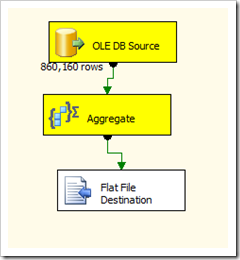

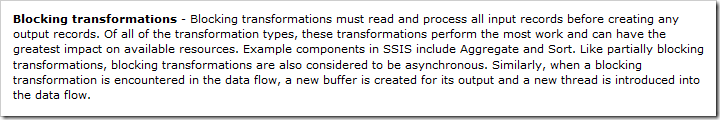

SSIS gives us a Aggregate transformation for use in Data Flow Tasks, but it’s described as Blocking. The definitive article on Performance Tuning SSIS uses Sort and Aggregate as examples of Blocking Transformations.

I’ve just shown you that Aggregate operations used by the Query Optimizer are not always blocking, but that the SSIS Aggregate component is an example of a blocking transformation. But is it always the case? After all, there are plenty of SSIS Performance Tuning talks out there that describe the value of sorted data in Data Flow Tasks, describing the IsSorted property that can be set through the Advanced Editor of your Source component.

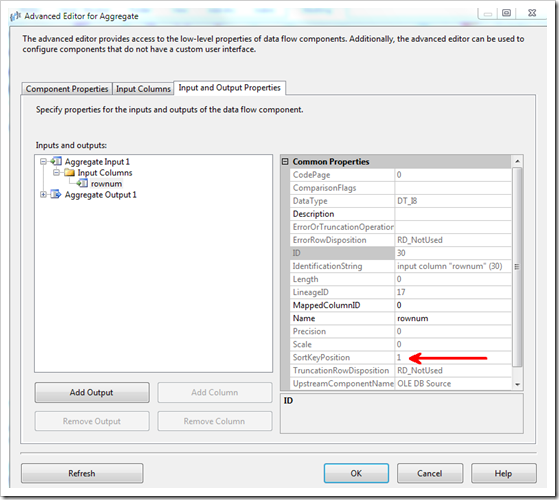

And so I set about testing the Aggregate transformation in SSIS, to prove for sure whether providing Sorted data would let the Aggregate transform behave like a Stream Aggregate. (Of course, I knew the answer already, but it helps to be able to demonstrate these things).

A query that will produce a million rows in order was in order. Let me rephrase. I used a query which produced the numbers from 1 to 1000000, in a single field, ordered. The IsSorted flag was set on the source output, with the only column as SortKey 1. Performing an Aggregate function over this (counting the number of rows per distinct number) should produce an additional column with 1 in it.

If this were being done in T-SQL, the ordered data would allow a Stream Aggregate to be used. In fact, if the Query Optimizer saw that the field had a Unique Index on it, it would be able to skip the Aggregate function completely, and just insert the value 1. This is a shortcut I wouldn’t be expecting from SSIS, but certainly the Stream behaviour would be nice.

Unfortunately, it’s not the case.

As you can see from the screenshots above, the data is pouring into the Aggregate function, and not being released until all million rows have been seen. It’s not doing a Stream Aggregate at all.

This is expected behaviour.

(I put that in bold, because I want you to realise this.)

An SSIS transformation is a piece of code that runs. It’s a physical operation. When you write T-SQL and ask for an aggregation to be done, it’s a logical operation. The physical operation is either a Stream Aggregate or a Hash Match. In SSIS, you’re telling the system that you want a generic Aggregation, that will have to work with whatever data is passed in.

I’m not saying that it wouldn’t be possible to make a sometimes-blocking aggregation component in SSIS. A Custom Component could be created which could detect whether the SortKeys columns of the input matched the Grouping columns of the Aggregation, and either call the blocking code or the non-blocking code as appropriate. One day I’ll make one of those, and publish it on my blog. I’ve done it before with a Script Component, but as Script components are single-use, I was able to handle the data knowing everything about my data flow already.

As per my previous post – there are a lot of aspects in which tuning SSIS and tuning execution plans use similar concepts. In both situations, it really helps to have a feel for what’s going on behind the scenes. Considering whether an operation is blocking or not is extremely relevant to performance, and that it’s not always obvious from the surface.

In a future post, I’ll show the impact of blocking v non-blocking and synchronous v asynchronous components in SSIS, using some of LobsterPot’s Script Components and Custom Components as examples. When I get that sorted, I’ll make a Stream Aggregate component available for download.

This Post Has 5 Comments

Something odd was going on with the links for this. It was saved as a draft on the 7th, and published on the 8th. Hopefully both links work…

Really great post, Rob! I very much like the mix of optimizer and SSIS information– I don’t tend to read up much on SSIS, but I really enjoy reading your posts.

I did get a little bit of a laugh from this sentence:

"This is all very straight-forward stuff, and information that most people are fully aware of."

You may be spending too much time with geeks, Rob. 🙂

hanks for the effort. I especially like the analogy using playing cards, it makes a complex topic clear.

Thanks for throwing your hat in the T-SQL Tuesday ring Rob! As someone who is just getting more comfortable with execution plans and the query optimizer, and has very little knowledge of SSIS, this is easy-to-understand and helpful info.

Always a pleasure, Jes. I haven’t missed a T-SQL Tuesday yet. 🙂