Let’s start with some basics and then jump in a bit deeper, for this post to go with the 40th T-SQL Tuesday, hosted this month by Jen McCown.

SQL Server holds data, and that data is stored physically in files.

Of course, in the database world we think of the data as living in tables*, so naturally there must be some sort of mapping between the data in a table and the data in a file. This is where filegroups come in.

When SQL objects are created within a database, there is an option to be able to tell the system where to actually store it. But this isn’t a file, it’s a filegroup. (If it were a file and the disk volume that the file was on filled up, we’d have big problems. Instead, we put it in a filegroup, and can add extra files (on different volumes if so desired) to that filegroup.) Objects are stored within filegroups. Filegroups are groups of files, although many database systems do end up with only a single file per filegroup.

Filegroups end up providing a large amount of flexibility for the storage of data. Rarely accessed data can be put in filegroups that have files on cheaper (but probably slower) disk, while data that is heavily written can be put on disk that’s more appropriate for that, and so on. I’m sure you get the picture, and this is nothing overly thought-worthy.

You may even have already considered the idea around partitioning data across filegroups, moving data of a certain age (but potentially even from the same table) onto a different filegroup, so that queries that use different parts of tables can benefit from having some data on faster disk. Lots of potential. 🙂

Where it becomes interesting though, is when you start to consider the concept of non-clustered indexes.

You see, while a clustered index (or heap) provides the structure to store the data across all the columns of a table (although I’m simplifying it a little in regard to LOB data), we use copies of some of that data (in non-clustered indexes) to optimise access to the data.

So where are these copies stored? Many database people don’t even think about this kind of thing, in the same way that they often don’t think about including indexes in their database design. I’m going to figure you’re not in that boat though, because I probably know you, and you’re probably not one of those people.

Most people like to have a default filegroup that is NOT the primary filegroup. It means that when they create new objects, those new objects get put in the default filegroup, not the primary one. But it’s not actually that simple.

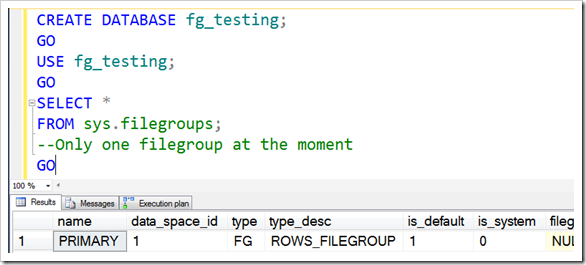

Let’s start by creating a database.

|

1 2 3 4 5 6 7 |

CREATE DATABASE fg_testing; GO USE fg_testing; GO SELECT * FROM sys.filegroups; --Only one filegroup at the moment |

Notice the column data_space_id. This is the column which identifies each filegroup. We’ll use it later.

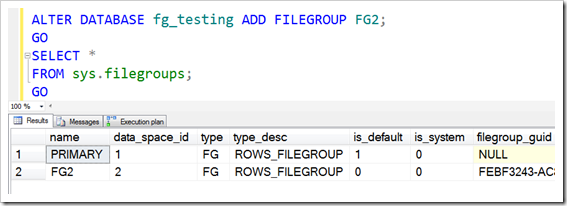

Let’s create a new filegroup and set it to be the default.

|

1 2 3 4 5 |

ALTER DATABASE fg_testing ADD FILEGROUP FG2; GO SELECT * FROM sys.filegroups; GO |

Cool – data_space_id 2 is created.

|

1 |

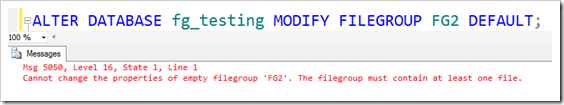

ALTER DATABASE fg_testing MODIFY FILEGROUP FG2 DEFAULT; |

I think this is a shame – but I guess it’s fair enough. We have to have a file in the filegroup before we can make it the default. That’s easy enough though, and probably what we’d be wanting to do before too long anyway.

|

1 2 3 |

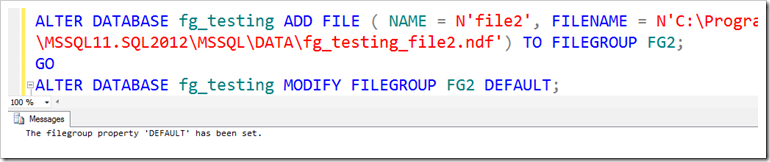

ALTER DATABASE fg_testing ADD FILE ( NAME = N'file2', FILENAME = N'C:\Program Files\Microsoft SQL Server\MSSQL11.SQL2012\MSSQL\DATA\fg_testing_file2.ndf') TO FILEGROUP FG2; GO ALTER DATABASE fg_testing MODIFY FILEGROUP FG2 DEFAULT; |

(I’m doing this on my laptop, which only has one physical drive – on a server it’d go somewhere more sensible of course)

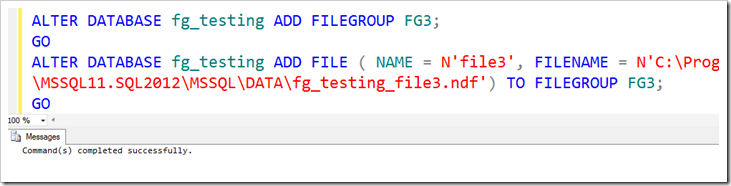

Now let’s add another filegroup. This is going to be for some data, but I don’t want it to be my default.

|

1 2 3 |

ALTER DATABASE fg_testing ADD FILEGROUP FG3; GO ALTER DATABASE fg_testing ADD FILE ( NAME = N'file3', FILENAME = N'C:\Program Files\Microsoft SQL Server\MSSQL11.SQL2012\MSSQL\DATA\fg_testing_file3.ndf') TO FILEGROUP FG3; |

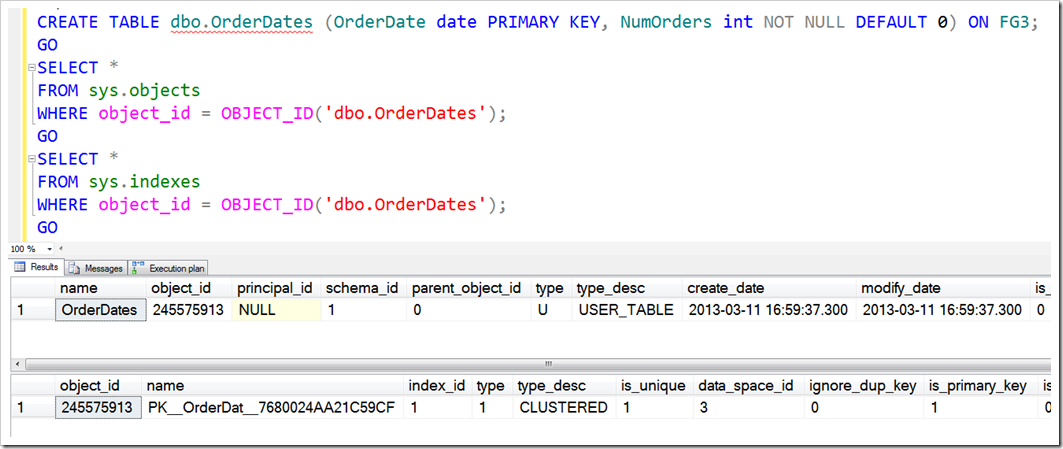

Now, I want to create an object on FG3, which I’m going to pretend is my slower disk.

Once created, I’m looking in sys.objects to confirm which filegroup the table is in, but there’s no information there. Remember that a table is only metadata, and the things that matter are the indexes/heaps that are on it. Checking in sys.indexes shows me that indeed, it’s in data_space_id 3.

|

1 2 3 4 5 6 7 8 9 10 |

CREATE TABLE dbo.OrderDates (OrderDate date PRIMARY KEY, NumOrders int NOT NULL DEFAULT 0) ON FG3; GO SELECT * FROM sys.objects WHERE object_id = OBJECT_ID('dbo.OrderDates'); GO SELECT * FROM sys.indexes WHERE object_id = OBJECT_ID('dbo.OrderDates'); GO |

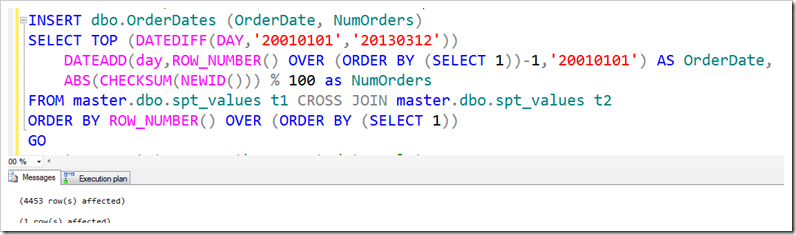

For completeness’ sake, I’m going to put some data in there, using a query that I blogged about yesterday.

|

1 2 3 4 5 6 7 |

INSERT dbo.OrderDates (OrderDate, NumOrders) SELECT TOP (DATEDIFF(DAY,'20010101','20130312')) DATEADD(day,ROW_NUMBER() OVER (ORDER BY (SELECT 1))-1,'20010101') AS OrderDate, ABS(CHECKSUM(NEWID())) % 100 as NumOrders FROM master.dbo.spt_values t1 CROSS JOIN master.dbo.spt_values t2 ORDER BY ROW_NUMBER() OVER (ORDER BY (SELECT 1)) GO |

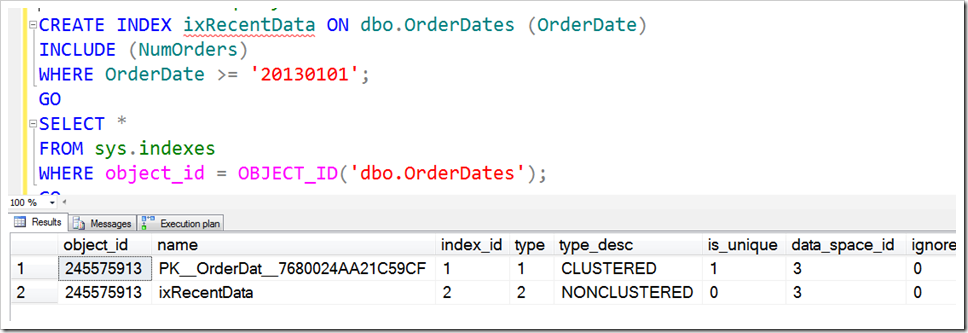

But the whole point of this was to see what happens with the non-clustered index, which I want to be on recent data only (ie, filtered), and I want it to be in the DEFAULT filegroup.

As I want it in the default group, I won’t specify a filegroup for the index.

|

1 2 3 4 5 6 7 |

CREATE INDEX ixRecentData ON dbo.OrderDates (OrderDate) INCLUDE (NumOrders) WHERE OrderDate >= '20130101'; GO SELECT * FROM sys.indexes WHERE object_id = OBJECT_ID('dbo.OrderDates'); |

But look! It’s put the non-clustered index in the same filegroup as the clustered index. This isn’t what I wanted. In fact, it’s almost never what I’d want, because even if the disk performance is identical, it can be nice to have transactions which update both the clustered and non-clustered indexes using different disks.

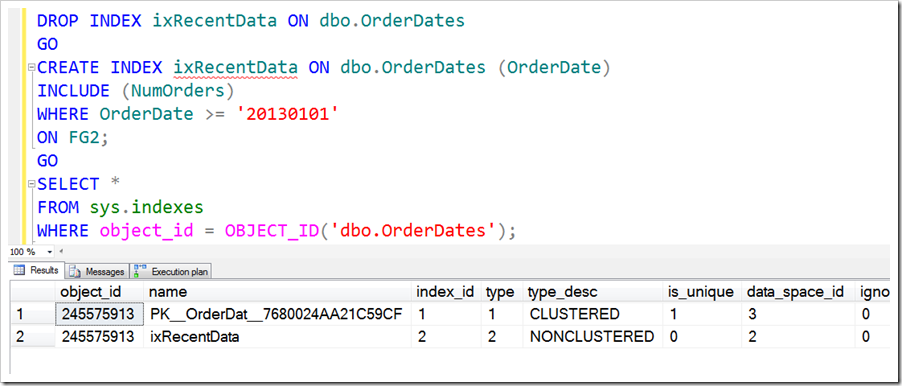

Instead, we have to specify it explicitly to tell it to use the filegroup we want.

|

1 2 3 4 5 6 7 8 9 10 |

DROP INDEX ixRecentData ON dbo.OrderDates GO CREATE INDEX ixRecentData ON dbo.OrderDates (OrderDate) INCLUDE (NumOrders) WHERE OrderDate >= '20130101' ON FG2; GO SELECT * FROM sys.indexes WHERE object_id = OBJECT_ID('dbo.OrderDates'); |

It’s a shame to have to be so explicit with these things, but when you create non-clustered indexes, you really should think about which filegroup they’re going onto, because they may well not go onto the default one as you might expect.

This Post Has 9 Comments

Also, even append-only workloads might fragment a table and its index to 100% if both are on the same filegroup (even if nothing else is on that filegroup). They might get every other extent allocated. Using a FG per partition solves that (and AFAIK is the *only* thing that solves it proactively).

SQL Servers allocation algorithms are really awful and I have already complained about it on connect.

Yeah, there are a bunch of reasons to think carefully about index storage. I really think they deserve to be considered more carefully that they typically are.

Hi, Rob, good post. Can you explain more about "it can be nice to have transactions which update both the clustered and non-clustered indexes using different disks", better with a quick example? Just a few sentences. Thank you.

Well, if you have some "write this" commands, it can be better to involve multiple disk controllers, rather than having it all go through the one.

Hi Rob…

As I understand it, the DEFAULT filegroup only comes into play when you do a CREATE TABLE. In other words, if no ON clause is specified with the CREATE TABLE command, it’s created on the DEFAULT filegroup. However, when you do a CREATE INDEX (without an ON clause), the index is created on the same filegroup as the table that’s being indexed… not the DEFAULT one.

I guess that makes sense… even though, as you say, it’s "almost never what [you] want."

(Love your date-population query, by the way)

–Brad

Hey Rob, do you normally still go to the trouble of putting indexes in a separate filegroup on a separate disk when the disks presented to the server are all part of the same RAID array? Cheers.

Hi Andrew,

When the volumes are all part of the same drive, I do find myself wondering if it’s worth separating them out or not. Generally, multiple volumes that map to the same physical drive array are there for one of two reasons. One is ignorance, but the other is that it’s in anticipation of the configuration being used on a different server. I frequently see Dev/Play environments where the volumes are all on the same physical disk(s), but that the plan is to deploy to a machine where they are separate. So I don’t want to say "Yes, if it’s all part of the same RAID array, don’t bother separating it", but do make a conscious decision about what you want to do and why you want to go down that path.

Rob

Rob,

We already have everything on a single filegroup for a small business standard edition of SQL Server 2008 R2 database. Database size is 30 GB approximately.

We are going to move the already existing SQL Server 2012 Standard Edition DB server to the new cloud environment. We are asked to suggest the partition of the disk storage for SQL Server DB Server. We allot one for MDF, 2nd one for LDF, Third one for Backups and the Fourth one for TempDB. Our Technical Director insists us to create another drive for Indexes voluntarily. Allotting a separate drive for Indexes is a good move?

If not in which situation we can do that. If yes, will this improve the performance for this much of small database?

Thanks & regards,

Vijay

Hi Vijay,

I wouldn’t worry. Separating indexes off is far less important than the things you’ve suggested around having separate disks for data files, log files, and tempdb. You could put your backups across the network even (if it’s fast enough). The biggest thing to consider here is that MDFs and LDFs and Tempdb have different styles of disk activity, and should use disk that’s configured to cater for that particular style.

If it’s easy to seperate stuff out even more, then maybe indexes, but it’s not a huge priority.

Rob